Think about this scenario: at a party, you see someone you like, and his social profile is immediately projected onto your retina. Great, a 92% match. By staring at him for two seconds, you trigger a pairing protocol. He knows you want to pair, because you now appear with a faint red glow on his retinal display. Then you slide your tongue over your left incisor and press gently. This makes his left incisor tingle slightly. He responds by touching it. The pairing protocol is complete.


Get weekly or monthly digest of all posts in your inbox: https://fmcs.digital/wim-subscribe

Curated by Farid Mheir



A very good review of human-computer interfaces and what the future may hold for us.

WHY THIS IS IMPORTANT

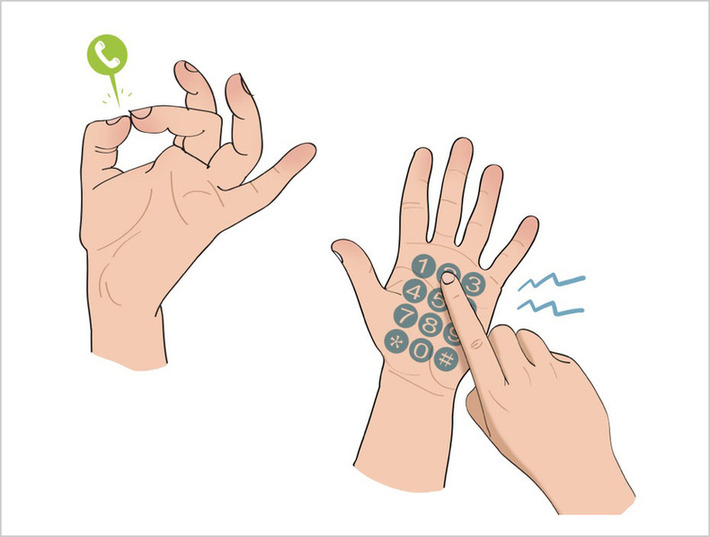

We cannot use a mouse and keyboard to interact with physical-world objects that have been digitized. Think of a warehouse employee who needs to interact with automated shelves to fetch a specific product. Today we would tap buttons on a mobile phone's touchscreen. But wouldn't it be simpler to interact with the shelves themselves, in ways our bodies naturally recognize, and have those interactions translated into the appropriate commands for the devices involved?

In the article, the far-fetched story about the couple at a party may not be very realistic, but it offers some very useful insights into how we could use our bodies to do things we cannot do with our devices today, for example for privacy reasons. Very interesting.